It is well known that African American women have been whitening their skin for a long time. To me this is awful, because I don't think you should have to change your skin color to be considered 'beautiful' by society.

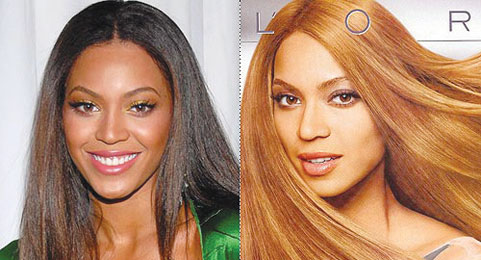

You can tell very easily that many celebrities have whitened their skin. Whether they do it intentionally or it is the result of digital enhancement, I am not sure. Either way, it is a terrible thing to want so badly to change yourself. What message is this sending to young African American girls? That they cannot be pretty unless their skin and hair are lighter.